A tale of bold flight and gentle landings

Strategy guidance for teaching robots to stay within safe bounds - by Nicholas Behr

Just like you, machines learn by exploring: by trying, adjusting, and trying again. Picture a robot arm learning to assemble delicate parts: each movement is practice, each success a small victory. But a wrong move could bend metal, shatter glass, or jam the whole assembly. How would you best mentor it to explore potential strategies boldly, while still keeping it and everything around it safe?

And so, dear reader, our tale begins:

Once upon a time, in a land not so far away, three young birds stood at the edge of their nest, each about to take flight under a different kind of mentoring ...

Pip was nudged from the nest and left to learn on his own. He flapped wildly, crashed into branches, tumbled to the ground, and picked himself up again and again. After each attempt, he could sense whether he was doing better or worse: soaring felt good, crashing felt bad. Through countless painful crashes, Pip slowly learned which movements worked, but at a high cost in bumps and bruises.

Cora also learned through trial and error, but her mother watched from nearby. Only when Cora’s current path would certainly lead to a crash (heading straight for a branch with no way out) would her mother intervene with urgent commands: “Bank left now!” “Pull up!” This kept Cora much safer than Pip while still allowing bold maneuvers. But because she relied on specific emergency commands, Cora learned to fly reactively, building her skills around following instructions rather than developing her own flying intuition.

Kael also received interventions only when his current approach would definitely end in a crash. But instead of emergency commands, his mother offered the closest alternative flying technique that would avoid the danger: “Here’s a similar way to handle landings that would avoid the stall.” This let Kael explore boldly, receiving gentle adjustments that kept the spirit of his own strategy while avoiding disaster.

From nest to laboratory, the same principles apply: the machines around us, like our three young birds, need space to explore and learn, but also guidance to keep them safe. We can’t afford to let them crash repeatedly like Pip, nor do we want them, like Cora, to learn only narrow, situation-specific fixes that don’t teach broader lessons.

Our research develops safety systems that work like Kael’s mother: we intervene only when a machine’s current strategy will undoubtedly lead to trouble, and instead of shouting emergency commands, we offer a close alternative strategy that preserves the original intent. By ruling out dangerous strategies while keeping the spirit of exploration alive, we let machines focus their learning on approaches that work. That way, the robot arm assembling delicate parts can become both precise and safe, without collecting too many bruises from hitting the nearest tree.

Text by Nicholas Behr; illustration generated with ChatGPT

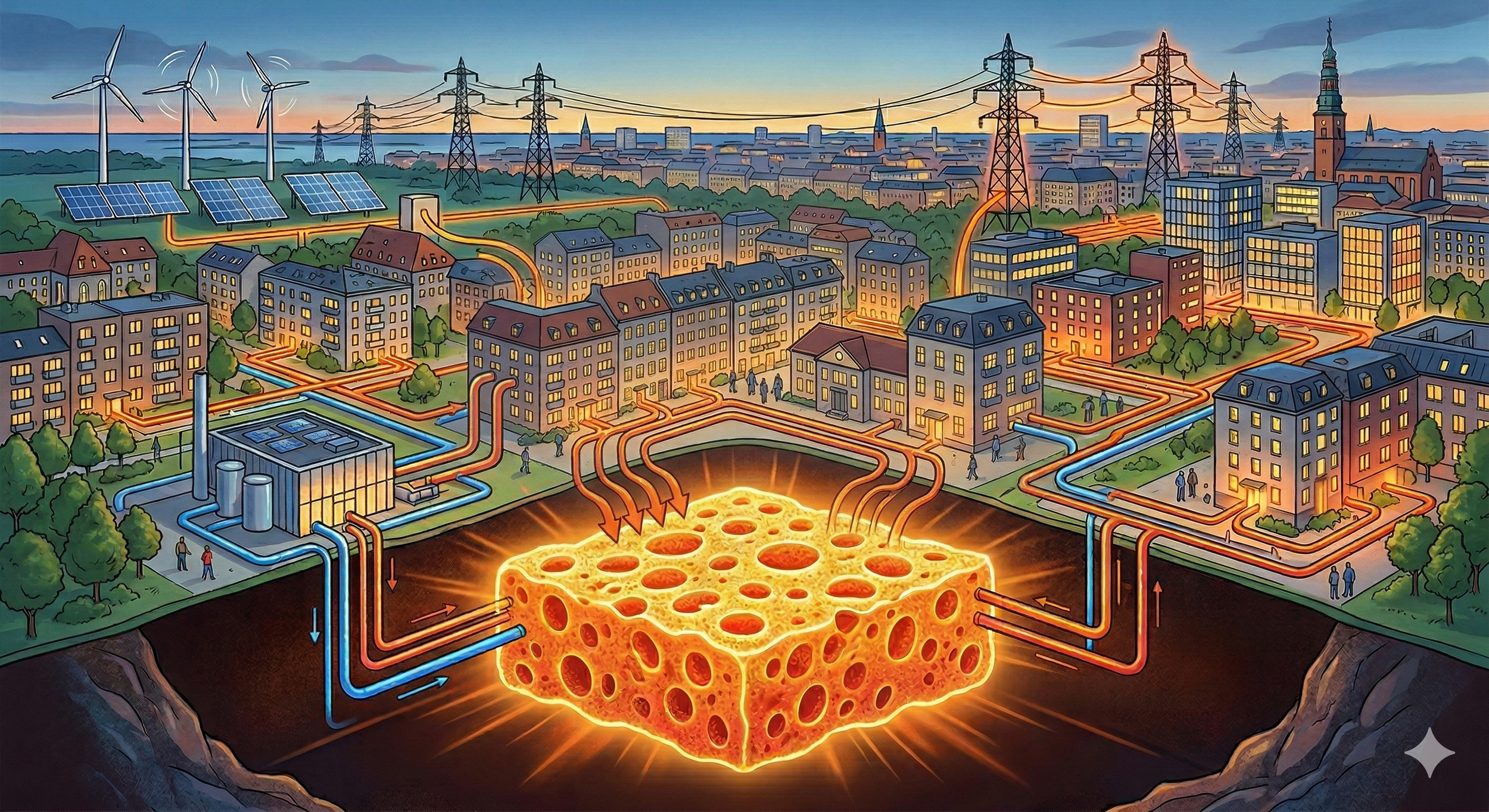

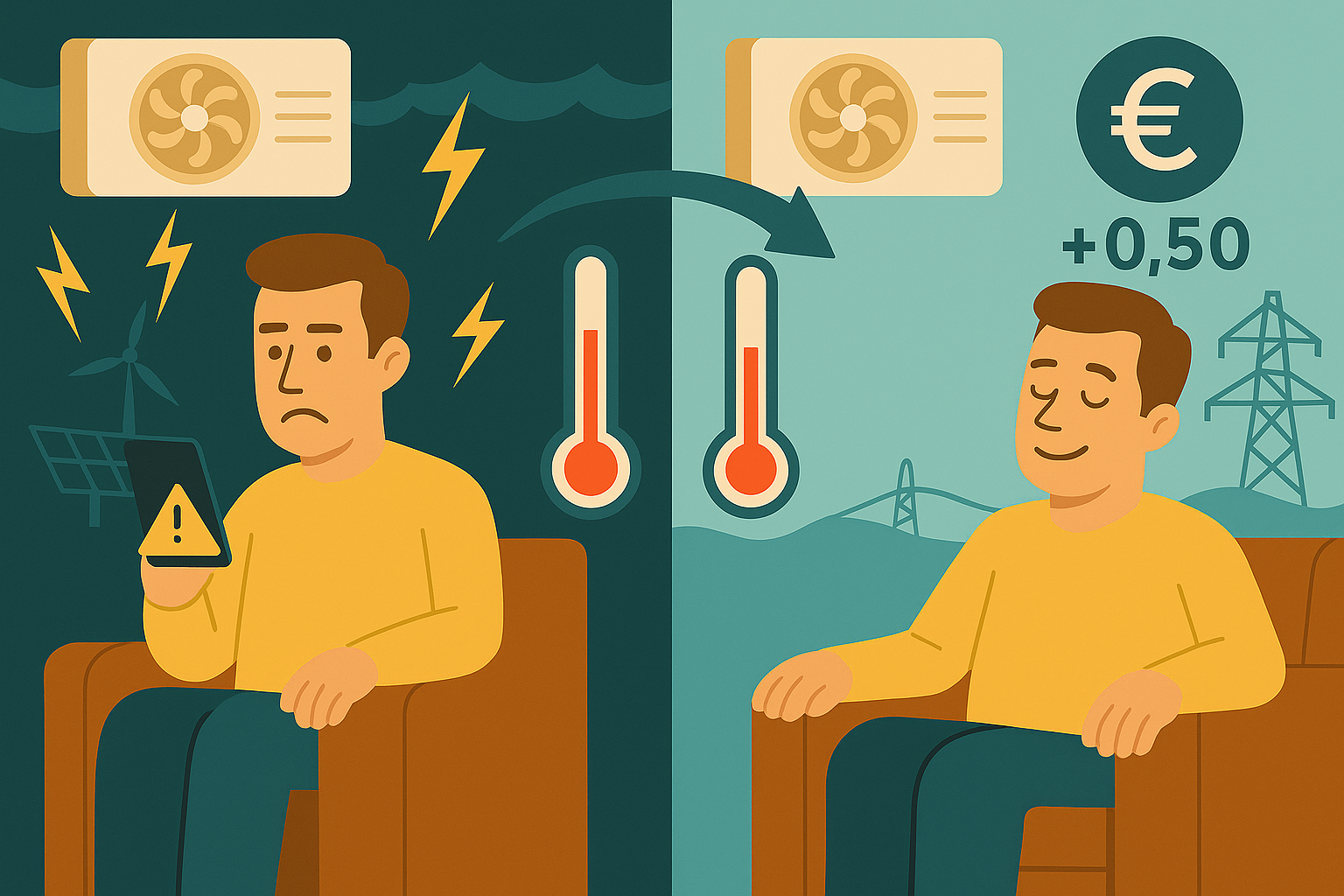

Automation solutions for next-generation electric grids - by Pulkit Nahata